Before You Start

Start from a Python>=3.8 environment with PyTorch>=1.7 installed. To install PyTorch, see https://pytorch.org/get-started/locally/. Then install the YOLOv5 dependencies:

pip install -qr https://raw.githubusercontent.com/ultralytics/yolov5/master/requirements.txt # install dependencies
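As a quick sanity check after installation, the version requirements above can be verified from Python. This is a minimal sketch, not part of YOLOv5: the helper names `meets_minimum` and `check_environment` are hypothetical, and only the major and minor version numbers are compared.

```python
import re
import sys


def meets_minimum(version, minimum):
    """Return True if a version string such as '1.13.1+cu117' is >= minimum.

    Hypothetical helper: only the leading major.minor numbers are compared.
    """
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return (int(match.group(1)), int(match.group(2))) >= tuple(minimum)


def check_environment():
    """Collect human-readable problems with the current environment."""
    problems = []
    if sys.version_info[:2] < (3, 8):
        problems.append("Python >= 3.8 is required")
    try:
        import torch  # imported lazily so the check also runs without PyTorch
    except ImportError:
        problems.append("PyTorch is not installed")
    else:
        if not meets_minimum(torch.__version__, (1, 7)):
            problems.append(f"PyTorch >= 1.7 is required, found {torch.__version__}")
    return problems


if __name__ == "__main__":
    for problem in check_environment() or ["environment looks OK"]:
        print(problem)
```

An empty list from `check_environment()` means both requirements stated above are met.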

Model Description

YOLOv5 🚀 is a family of compound-scaled object detection models trained on the COCO dataset, and includes simple functionality for Test Time Augmentation (TTA), model ensembling, hyperparameter evolution, and export to ONNX, CoreML and TFLite.

| Model | size (pixels) | mAP val 0.5:0.95 | mAP test 0.5:0.95 | mAP val 0.5 | Speed V100 (ms) | params (M) | FLOPs 640 (B) |
|---|---|---|---|---|---|---|---|
| YOLOv5s6 | 1280 | 43.3 | 43.3 | 61.9 | 4.3 | 12.7 | 17.4 |
| YOLOv5m6 | 1280 | 50.5 | 50.5 | 68.7 | 8.4 | 35.9 | 52.4 |
| YOLOv5l6 | 1280 | 53.4 | 53.4 | 71.1 | 12.3 | 77.2 | 117.7 |
| YOLOv5x6 | 1280 | 54.4 | 54.4 | 72.0 | 22.4 | 141.8 | 222.9 |
| YOLOv5x6 TTA | 1280 | 55.0 | 55.0 | 72.0 | 70.8 | - | - |

Table Notes

* AP test denotes COCO [test-dev2017](http://cocodataset.org/#upload) server results; all other AP results denote val2017 accuracy.
* AP values are for single-model single-scale unless otherwise noted. **Reproduce mAP** by `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`
* Speed (GPU) averaged over 5000 COCO val2017 images using a GCP [n1-standard-16](https://cloud.google.com/compute/docs/machine-types#n1_standard_machine_types) V100 instance, and includes FP16 inference, postprocessing and NMS. **Reproduce speed** by `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`
* All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).
* Test Time Augmentation ([TTA](https://github.com/ultralytics/yolov5/issues/303)) includes reflection and scale augmentation. **Reproduce TTA** by `python test.py --data coco.yaml --img 1536 --iou 0.7 --augment`
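To read the TTA row against the plain YOLOv5x6 row, the trade-off can be worked out directly from the table values. The sketch below is plain arithmetic on the published numbers, not a new measurement, and the variable names are illustrative.

```python
# Values copied from the table above: mAP val 0.5:0.95 and V100 speed in ms.
yolov5x6 = {"map_val": 54.4, "speed_ms": 22.4}
yolov5x6_tta = {"map_val": 55.0, "speed_ms": 70.8}

# Accuracy gained by enabling TTA, in mAP points.
map_gain = round(yolov5x6_tta["map_val"] - yolov5x6["map_val"], 1)

# How many times longer inference takes with TTA enabled.
slowdown = round(yolov5x6_tta["speed_ms"] / yolov5x6["speed_ms"], 2)

print(f"TTA gain: +{map_gain} mAP at {slowdown}x the inference time")
```

On these figures, TTA buys 0.6 mAP points for roughly three times the per-image latency, so it suits offline evaluation better than latency-sensitive use.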

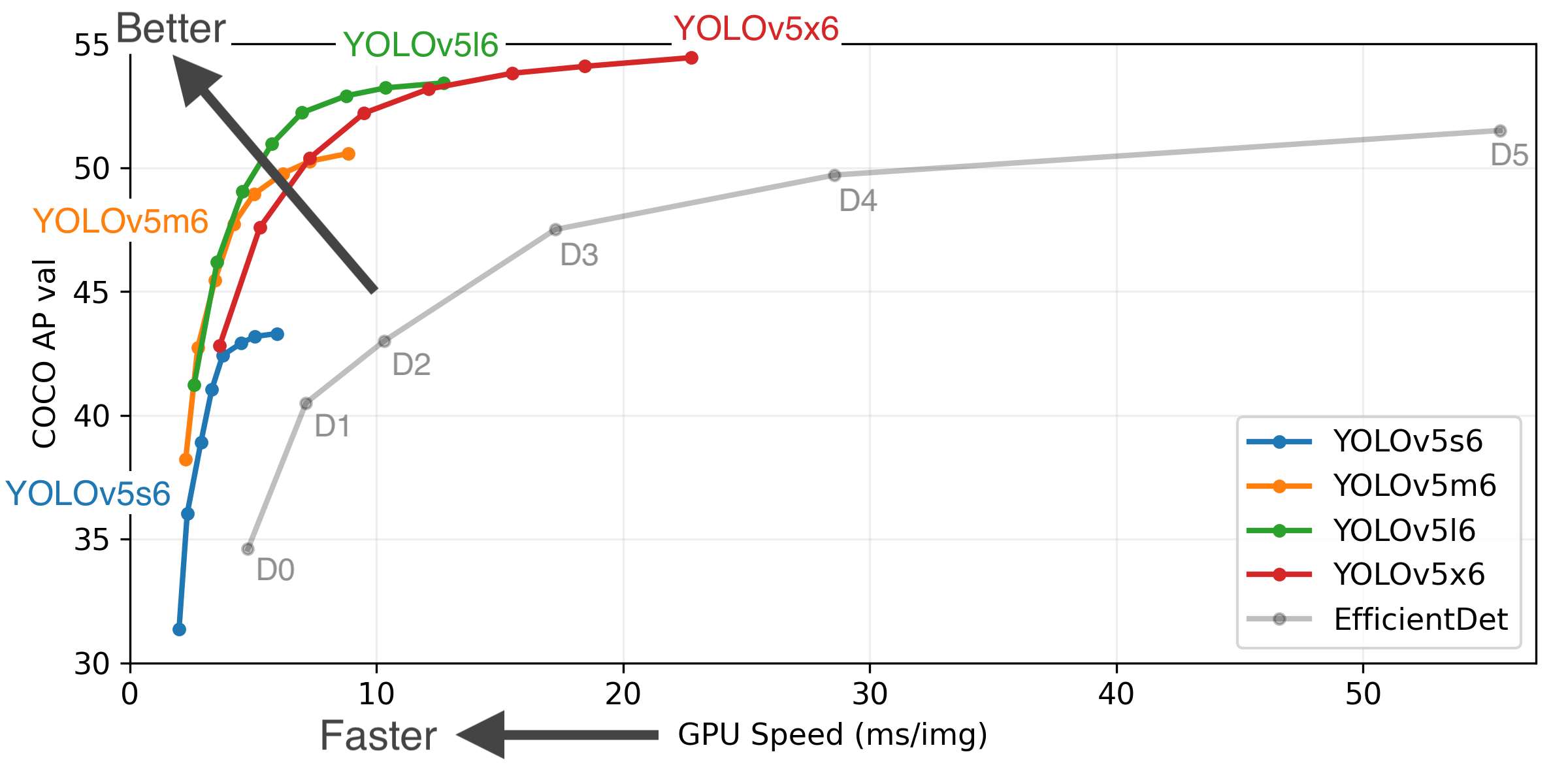

Figure Notes

* GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS.
* EfficientDet data from [google/automl](https://github.com/google/automl) at batch size 8.
* **Reproduce** by `python test.py --task study --data coco.yaml --iou 0.7 --weights yolov5s6.pt yolov5m6.pt yolov5l6.pt yolov5x6.pt`

Load From PyTorch Hub

This example loads a pretrained YOLOv5s model and passes an image for inference. YOLOv5 accepts URL, filename, PIL, OpenCV, NumPy and PyTorch inputs, and returns detections in torch, pandas, and JSON output formats. See our YOLOv5 PyTorch Hub Tutorial for details.

    import torch

    # Model
    model = torch.hub.load('ultralytics/yolov5', 'yolov5s', pretrained=True)

    # Images
    imgs = ['https://ultralytics.com/images/zidane.jpg']  # batch of images

    # Inference
    results = model(imgs)

    # Results
    results.print()
    results.save()  # or .show()

    results.xyxy[0]  # img1 predictions (tensor)
    results.pandas().xyxy[0]  # img1 predictions (pandas)
    #      xmin    ymin    xmax   ymax  confidence  class    name
    # 0  749.50   43.50  1148.0  704.5    0.874023      0  person
    # 1  433.50  433.50   517.5  714.5    0.687988     27     tie
    # 2  114.75  195.75  1095.0  708.0    0.624512      0  person
    # 3  986.00  304.00  1028.0  420.0    0.286865     27     tie
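Detections usually need filtering before downstream use. As a minimal sketch, the rows printed above are re-entered here as plain dictionaries so the example runs without downloading the model. The helper `filter_detections` and the 0.5 threshold are assumptions for illustration, not part of the YOLOv5 API.

```python
import json

# Rows copied from the pandas output shown above (zidane.jpg).
detections = [
    {"xmin": 749.50, "ymin": 43.50, "xmax": 1148.0, "ymax": 704.5,
     "confidence": 0.874023, "class": 0, "name": "person"},
    {"xmin": 433.50, "ymin": 433.50, "xmax": 517.5, "ymax": 714.5,
     "confidence": 0.687988, "class": 27, "name": "tie"},
    {"xmin": 114.75, "ymin": 195.75, "xmax": 1095.0, "ymax": 708.0,
     "confidence": 0.624512, "class": 0, "name": "person"},
    {"xmin": 986.00, "ymin": 304.00, "xmax": 1028.0, "ymax": 420.0,
     "confidence": 0.286865, "class": 27, "name": "tie"},
]


def filter_detections(rows, min_confidence=0.5):
    """Keep rows at or above the threshold and add box width and height.

    Hypothetical helper for illustration only.
    """
    kept = []
    for row in rows:
        if row["confidence"] >= min_confidence:
            kept.append({
                **row,
                "width": row["xmax"] - row["xmin"],
                "height": row["ymax"] - row["ymin"],
            })
    return kept


confident = filter_detections(detections)
print(json.dumps(confident, indent=2))  # JSON-serialisable list of boxes
```

With a loaded model, the same kind of row list could be obtained from the pandas output via `results.pandas().xyxy[0].to_dict(orient="records")`, since `results.pandas().xyxy[0]` is a regular DataFrame.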

Citation

Contact

Issues should be raised directly in https://github.com/ultralytics/yolov5. For business inquiries or professional support requests please visit https://ultralytics.com or email Glenn Jocher at [email protected].